As cybercriminals continue to expand their arsenal, utilising new methods such as voice phishing to cause further damage, it’s important that organisations step up their defences. Jonathan Miles, Head of Strategic Intelligence and Security Research, Mimecast, tells us how these attacks are conducted and offers advice to CISOs on the steps they can take to mitigate them.

Since the dawn of social engineering, attack methodology has remained largely unchanged. But the rise of electronic communications and the move away from in-person interaction have changed this dynamic. Business email compromise (BEC) attacks have risen in prominence, using email fraud to target victims through invoice scams and spoofed spear-phishing emails that gather data for other criminal activities.

Deepfake attacks, or voice phishing attacks, are an extension of BECs and have introduced a new dimension to the attacker’s arsenal.

Social engineering attacks, commonly perpetrated through impersonation and phishing, are an effective tactic for criminal entities and threat actors and have shown a sustained increase throughout 2019. Threat actors impersonate email addresses, domains, subdomains, landing pages, websites, mobile apps and social media profiles, often in combination, to trick targets into surrendering credentials and other personal information or installing malware.
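One of the impersonation techniques described above, the lookalike domain, can be illustrated with a minimal sketch. It assumes a hypothetical allow-list of trusted domains and an arbitrary similarity threshold of 0.85; neither comes from Mimecast, and production tooling would use far richer signals than simple string similarity.

```python
from difflib import SequenceMatcher

# Hypothetical allow-list; a real deployment would load the organisation's
# own domains and those of its known suppliers.
TRUSTED_DOMAINS = {"example.com", "example-supplier.com"}


def lookalike_of(domain, trusted=TRUSTED_DOMAINS, threshold=0.85):
    """Return the trusted domain that `domain` appears to imitate, or None.

    The threshold is an illustrative assumption, not a recommended value.
    """
    domain = domain.lower().strip(".")
    if domain in trusted:
        return None  # an exact match is the genuine domain, not a lookalike
    for good in sorted(trusted):
        # ratio() is 1.0 for identical strings and falls towards 0.0
        # as the two strings diverge.
        if SequenceMatcher(None, domain, good).ratio() >= threshold:
            return good
    return None


if __name__ == "__main__":
    for candidate in ("examp1e.com", "example.com", "unrelated.org"):
        print(candidate, "->", lookalike_of(candidate))
```

A check of this kind only catches near-identical spellings; it would not detect a compromised genuine account, which is why it can only complement user awareness, not replace it.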

However, the threat has been compounded by a new layer of duplicity: deepfakes, or voice phishing, are increasingly being used alongside business email compromise (BEC) to elicit fraudulent fund transfers.

What is a deepfake?

Deepfake, a combination of Deep Learning and fake, is a process that combines and superimposes existing images and videos onto source media to produce a fabricated end product. It is a technique that employs Machine Learning and Artificial Intelligence to create synthetic human image or voice content and is considered to be social engineering since its aim is to deceive or coerce individuals. In today’s charged global political climate, the output of a deepfake attack can also be used to create distrust, change opinion and cause reputational damage.

A Deep Learning model is trained on a large, labelled dataset of video or audio samples until it reaches an acceptable level of accuracy. With adequate training, the model will be able to synthesise a face or voice that matches the training data closely enough to be perceived as authentic.

Many are aware of fake videos of politicians, carefully crafted to convey false messages and statements that call their integrity into question. But with companies becoming more vocal and visible on social media, and CEOs speaking out about purpose-driven brand strategies using videos and images, is there a risk that influential business leaders will provide source material for kicking off possible deepfake attacks?

BEC: The first step in voice phishing

A BEC is the campaign that follows a highly focused period of research into a target organisation. Using all available resources to examine organisational structure, threat actors can effectively identify and target employees authorised to release payments. Through impersonation of senior executives or known and trusted suppliers, attackers seek authorisation and release of payments to false accounts.

An FBI report found that BEC attacks cost organisations worldwide more than US$26 billion between June 2016 and July 2019.

“The scam is frequently carried out when a subject compromises legitimate business or personal email accounts through social engineering or computer intrusion to conduct unauthorised transfers of funds,” according to the FBI alert.

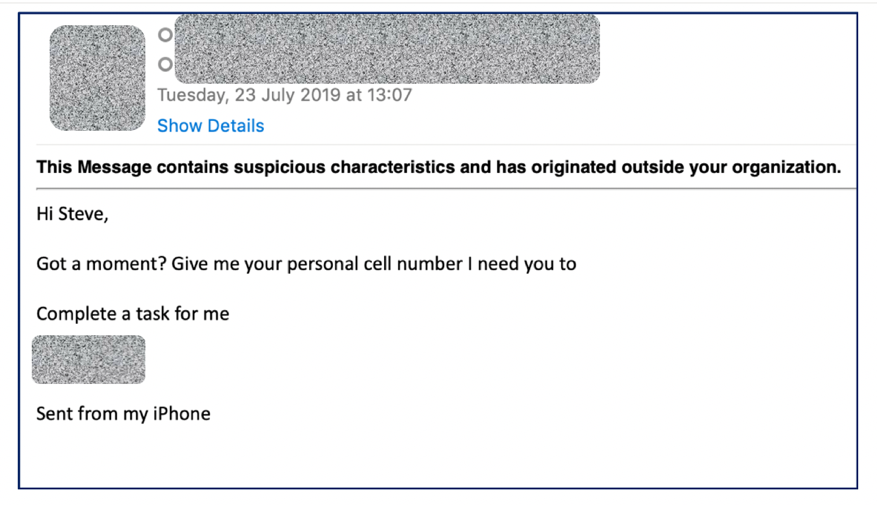

Figure 1: Possible example of pre-deepfake audio attack BEC email

In Figure 1, the request for a personal cell number suggests that the attacker intends to move the conversation off the company telephony network, circumventing any caller ID facility that would otherwise confirm the caller’s identity.
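The warning signs discussed here, an executive’s name on an outside address and a request for a personal phone number, can be expressed as a simple heuristic. The sketch below is illustrative only: the executive list, company domain and phrase pattern are hypothetical assumptions, and a real email security gateway would rely on many more indicators.

```python
import re
from email.utils import parseaddr

# Hypothetical values for illustration only.
EXECUTIVES = {"jane doe"}
COMPANY_DOMAIN = "example.com"

# Phrases that ask the recipient for a personal phone number (assumed list).
PHONE_REQUEST = re.compile(
    r"\b(?:cell|mobile|personal)\s+(?:phone\s+)?number\b", re.IGNORECASE
)


def bec_warning_signs(from_header, body):
    """Return a list of BEC warning signs found in a single email."""
    name, address = parseaddr(from_header)
    domain = address.rpartition("@")[2].lower()
    signs = []
    # Sign 1: a senior executive's display name on a non-company address.
    if name.strip().lower() in EXECUTIVES and domain != COMPANY_DOMAIN:
        signs.append("executive display name on external address")
    # Sign 2: a request to continue the conversation on a personal phone.
    if PHONE_REQUEST.search(body):
        signs.append("asks for a personal phone number")
    return signs


if __name__ == "__main__":
    print(bec_warning_signs(
        "Jane Doe <jane.doe@mail-example.net>",
        "Are you at your desk? Send me your personal cell number.",
    ))
```

Neither sign proves fraud on its own, so a match is better treated as a prompt for out-of-band verification than as grounds for blocking the message outright.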

Figure 2: Possible example of pre-deepfake audio attack BEC email

Voice phishing enhances BEC attacks

Today, deepfake audio is used to enhance BEC attacks. Reporting has indicated that there has been a marked rise in deepfake audio attacks over the last year. But will these become more prominent as the next generation of phishing (or ‘vishing’ as in voice phishing) attacks and mature into the preferred attack vector instead of BEC?

Deepfake audio is considered one of the most advanced forms of cyberattack because of its use of AI technology. In fact, research has recently demonstrated that a convincing cloned voice can be developed with under four seconds of source audio. Within this short time frame, all the distinguishable personal voice traits necessary to create a convincing deepfake, such as pronunciation, tempo, intonation, pitch and resonance, are likely to be present to feed into the algorithm. However, the more source audio and training samples available, the more convincing the output.

Compared with deepfake video, deepfake audio is more extensible and more difficult to detect. As Axios has stated: “Detecting audio deepfakes requires training a computer to listen for inaudible hints that the voice couldn’t have come from an actual person.”

How deepfake phishing attacks are created

Deepfake audio is created by feeding training data and sample audio into appropriate algorithms. This material can comprise a multitude of audio clips of the target, often collected from sources such as speeches, presentations, interviews, TED talks, corporate videos, phone calls made in public and eavesdropping. Much of it is freely available online.

Through the use of speech synthesis, a voice model can be effortlessly created and is capable of reading out text with the same intonation, cadence and manner as the target entity. Some products even permit users to select a voice of any gender and age, rather than emulating the intended target. This methodology has the potential to allow for real-time conversation or interaction with a target, which will further hinder the detection of any nefarious activity.

Although the following examples of email audio attachments are likely not associated with deepfake methodology, potential vectors to elicit fraudulent activity or acquire user credentials cannot be dismissed. The examples provided below represent more typical and frequent types of voicemail attacks in existence.

A malicious voicemail attachment

Malicious voicemail attachment 2

Current threat landscape

With technology that allows criminal entities to collect voice samples from a myriad of open source platforms and model fake audio content, it is highly likely that there will be an increase in enhanced BEC attacks that are supplemented by deepfake audio.

In addition, as companies seek to interact more and more with their customer base through social media, the barrier to acquiring source material for deepfakes will be lower. As such, leaders must remain aware of the non-conventional threats they are exposing themselves to. They should maintain both a robust awareness training programme that evolves alongside voice phishing and a proactive threat intelligence model that takes steps to mitigate threats.
